Your paid signup has a 60% drop-off. You know it. Your team knows it. Someone suggests changing the button color. Someone else wants to rewrite the entire copy. Your designer has a completely different theory.

So what do you do? You debate it for three weeks, pick the loudest opinion in the room, and ship it.

Two months later, nothing has changed. Or things got slightly worse, and no one noticed because you had already moved on to the next gut-feel redesign.

That's the AB testing problem most SaaS teams don't talk about. It's not that they don't run experiments. It's that they run them the wrong way, on the wrong things, with no clear hypothesis, and then wonder why their conversion rate optimization program isn't moving the needle.

Fast-growing companies like Slack, HubSpot, and Intercom don't treat AB testing as a one-off tactic. They treat it as infrastructure. And that distinction is everything.

Expected Results

- Your trial-to-paid conversion rate improves because decisions are based on real user behavior, not the loudest opinion in the room.

- Onboarding drop-off decreases as you identify and fix actual friction points, not cosmetic ones.

- Pricing pages, upgrade nudges, and cancel flows do more revenue work without increasing CAC.

- Teams running structured AB testing programs see an average 18% lift in conversion within six months.

- Companies running 10 or more tests per month grow revenue 2.1x faster than those running just two.

- You build institutional knowledge test by test, so each new experiment starts from a higher baseline instead of repeating mistakes the team has already made.

What Does A/B Testing Really Mean for SaaS Companies?

Your A/B tests do not fail because of bad ideas. They fail because the wrong people were in the test.

When a first-time visitor and a three-year customer land in the same variant, the results get averaged together and you ship based on that. The data looked clean. The audience was not.

This is where AI-powered segmented experiments change the approach. Instead of running one test across your entire user base, you run it on a specific segment, say, trial users who hit your pricing page but did not upgrade. The AI identifies who belongs in that experiment based on live behavioral signals, not a static list you built last week. You get a result that actually reflects how a specific type of user responds, not an averaged-out signal muddied by people who had no business being in the test.

And when a variant wins, you do not ship it to everyone. You ship it to the segment it was built for. A messaging change that works for at-risk churners may do nothing for users who are three days into a trial, or it may even hurt their conversion. The winner is only a winner in context.
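Here is a small Python sketch that shows how an averaged result can hide a segment the variant hurts. The segment names and counts are made-up assumptions, not data from any real test. In practice, each segment also needs its own adequate sample before its lift means anything.

```python
# Hypothetical results: (segment, arm) -> (users, conversions)
RESULTS = {
    ("new_visitor", "control"): (1000, 100),
    ("new_visitor", "variant"): (1000, 130),
    ("long_time_customer", "control"): (200, 60),
    ("long_time_customer", "variant"): (200, 50),
}


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative change of the variant's conversion rate over control."""
    return variant_rate / control_rate - 1


def lift_by_segment(results: dict) -> dict:
    """Relative lift computed separately inside each segment."""
    lifts = {}
    for segment in sorted({seg for seg, _ in results}):
        c_users, c_conv = results[(segment, "control")]
        v_users, v_conv = results[(segment, "variant")]
        lifts[segment] = relative_lift(c_conv / c_users, v_conv / v_users)
    return lifts


def aggregate_lift(results: dict) -> float:
    """Relative lift when every segment is pooled into one number."""
    totals = {"control": [0, 0], "variant": [0, 0]}
    for (_, arm), (users, conv) in results.items():
        totals[arm][0] += users
        totals[arm][1] += conv
    (c_users, c_conv), (v_users, v_conv) = totals["control"], totals["variant"]
    return relative_lift(c_conv / c_users, v_conv / v_users)


print(f"Aggregate lift: {aggregate_lift(RESULTS):+.1%}")
for segment, lift in lift_by_segment(RESULTS).items():
    print(f"{segment}: {lift:+.1%}")
```

With these invented numbers, the pooled result shows a +12.5% lift. Meanwhile, long-time customers convert about 17% worse on the variant. That is exactly the kind of loss an averaged test reports as a win.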

Split Testing vs. Multivariate Testing

| | AB Testing (Split Testing) | Multivariate Testing (MVT) |
|---|---|---|
| What it tests | One variable at a time | Multiple variables simultaneously |
| What you learn | Exactly what caused the result | Which combination performs best |
| Traffic required | 1,000–5,000 users per variant | 50,000+ monthly visitors per page |
| Best for | Most SaaS teams and use cases | High-traffic pages like the homepage or pricing |
| Risk | Low: clean, causal data | High: underpowered tests produce noise |
| When to use | Always start here | Only once you have the traffic to support it |

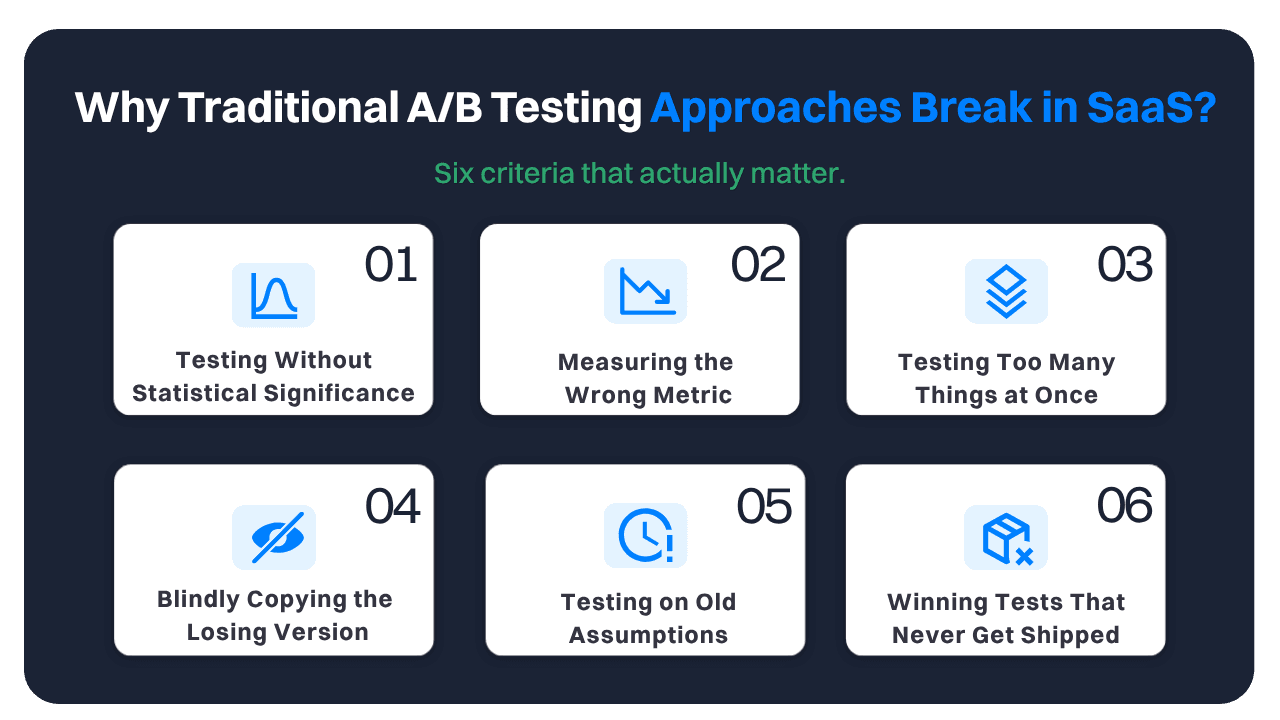

Why Do Traditional A/B Testing Approaches Break in SaaS?

Most SaaS teams don't have a testing problem. They have a process problem. And there's a difference.

Here's what actually happens at most companies: the growth team launches an experiment because someone had an idea, not because they had a hypothesis. They run it for five days, see a 3% lift, call it significant, and ship.

Meanwhile, the experiment was underpowered, contaminated by a product release mid-test, and measured signups rather than activations. The lift was not real.

1. Running Tests Without Statistical Significance

The standard threshold for a valid AB test is 95% statistical confidence (p-value below 0.05). Most teams don't hit it because they stop tests too early. You see an early positive trend, you get excited, you stop the test.

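Repeatedly checking results and stopping the moment they look significant is called "peeking." Every extra look gives random noise another chance to cross the 95% line. As a result, a test that is checked daily and stopped at the first good reading has a false-positive rate well above the nominal 5%. Peeking is responsible for a large share of false positives in SaaS AB testing. Only 1 in 7 AB tests actually produces a statistically significant result. That's not failure. That's the nature of rigorous experimentation. Calling a winner too early just means you're shipping noise.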
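A quick way to see why peeking misleads is to simulate A/A tests. In an A/A test, both arms share the same true conversion rate, so any "winner" is a false positive. The Python sketch below makes a few illustrative assumptions:

- a 10% conversion rate
- 1,000 users per arm
- a significance check after every 50 users

The pooled two-proportion z-test is one common choice of test, not the only one.

```python
import random
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions): no evidence either way
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(n_sims: int = 300, users_per_arm: int = 1000, looks: int = 20,
                      rate: float = 0.10, alpha: float = 0.05, seed: int = 7):
    """Simulate A/A tests and return (peeking FPR, fixed-horizon FPR)."""
    rng = random.Random(seed)
    batch = users_per_arm // looks
    peeking_hits = fixed_hits = 0
    for _ in range(n_sims):
        conv_a = conv_b = n = 0
        stopped_early = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            # Peeking rule: declare a winner the first time p < alpha
            if not stopped_early and two_proportion_p_value(conv_a, n, conv_b, n) < alpha:
                stopped_early = True
        peeking_hits += stopped_early
        # Fixed-horizon rule: a single check at the planned sample size
        fixed_hits += two_proportion_p_value(conv_a, n, conv_b, n) < alpha
    return peeking_hits / n_sims, fixed_hits / n_sims


peeking_fpr, fixed_fpr = simulate_aa_tests()
print(f"False-positive rate with peeking:   {peeking_fpr:.1%}")
print(f"False-positive rate, fixed horizon: {fixed_fpr:.1%}")
```

Both rules see the same simulated data, so the peeking rate can never be lower than the fixed-horizon rate. With 20 looks, it typically lands well above the nominal 5%.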

2. Measuring the Wrong Metric

A SaaS company runs an AB test on its pricing page CTA. Version B wins with a 22% higher click rate. They ship it. Paid conversions stay flat. Why? Because click rate on a pricing CTA doesn't equal revenue. It equals clicks.

If you're not measuring the metric that actually matters, you're optimizing for the wrong thing. Define your primary success metric before you launch the test, tied directly to a business outcome: activated users, paid conversions, 30-day retention.

3. Testing Too Many Things at Once

This one's quiet but lethal. Your engineering team is shipping new features. Your marketing team is running email campaigns. Your designer updated the UI. All while your AB test is running. Any of those changes can contaminate results.

Concurrent experiments need to be coordinated so you know what caused what. This is why mature SaaS companies like Spotify run 1,000+ concurrent experiments with dedicated infrastructure, not just a spreadsheet.
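One common building block for running overlapping experiments is deterministic, hash-based assignment. Hashing the user ID together with an experiment ID gives each user a stable variant per experiment. Because each experiment uses a different ID as the salt, assignments in one test are statistically independent of assignments in another.

The Python sketch below illustrates that idea. It is not Spotify's system or any vendor's API. It also does not detect interaction effects between tests on its own, and you still need to coordinate with releases and campaigns.

```python
import hashlib
from collections import Counter


def assign_variant(user_id: str, experiment_id: str,
                   variants=("control", "treatment"), weights=(0.5, 0.5)) -> str:
    """Deterministically map a user to one variant of one experiment."""
    key = f"{experiment_id}:{user_id}".encode("utf-8")
    # First 8 bytes of SHA-256 as an integer, scaled into [0, 1]
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") / 2**64
    cumulative = 0.0
    for variant, weight in zip(variants, weights):
        cumulative += weight
        if bucket < cumulative:
            return variant
    return variants[-1]  # fallback for floating-point rounding at the top of the range


# Two overlapping experiments on the same users
users = [f"user-{i}" for i in range(10_000)]
crosstab = Counter(
    (assign_variant(u, "pricing_cta"), assign_variant(u, "onboarding_nudge"))
    for u in users
)
print(crosstab)  # each of the four combinations should hold close to a quarter of users
```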

4. Blindly Copying Someone Else's Losing Version

Most SaaS teams build their testing roadmap by borrowing from competitors, calling what they see a "best practice," and running their own version of it. Casey Hill's analysis on Growth Unhinged, drawing from over 25,000 AB tests, found only 10% of tests tied to revenue beat their controls.

The "one CTA in the hero" rule is a textbook case: head-to-head tests from Slack, Shopify, Intercom, and Loom have repeatedly shown two CTAs outperforming one. That's not a best practice. That's a hypothesis someone else already tested and lost.

5. Testing on Old Assumptions

Testing playbooks from 2020 don't hold in 2026. "Simple backgrounds convert better" was gospel until Canva ran a split test where an illustrated background won outright, and Tom Orbach of the Marketing Ideas newsletter documented the same with a 25% lift for MineOS and 94% for MyCase.

Nearly 30% of the top 100 B2B SaaS brands now default to annual pricing displayed as a monthly figure, and Intercom made that switch in 2022. If your testing assumptions haven't been updated in a few years, you're running experiments the market has already moved past.

6. Winning Tests That Never Get Shipped

This one undermines the entire point of a testing program. GoDaddy tested AI-centric hero copy against a non-AI version; the less AI-heavy version appears to have won, and then they shipped full AI messaging across the site anyway. Talkdesk and Prezi followed the same pattern.

When what converts best conflicts with what investors or boards want, internal pressure beats test data. A testing culture only compounds if you actually ship what wins.

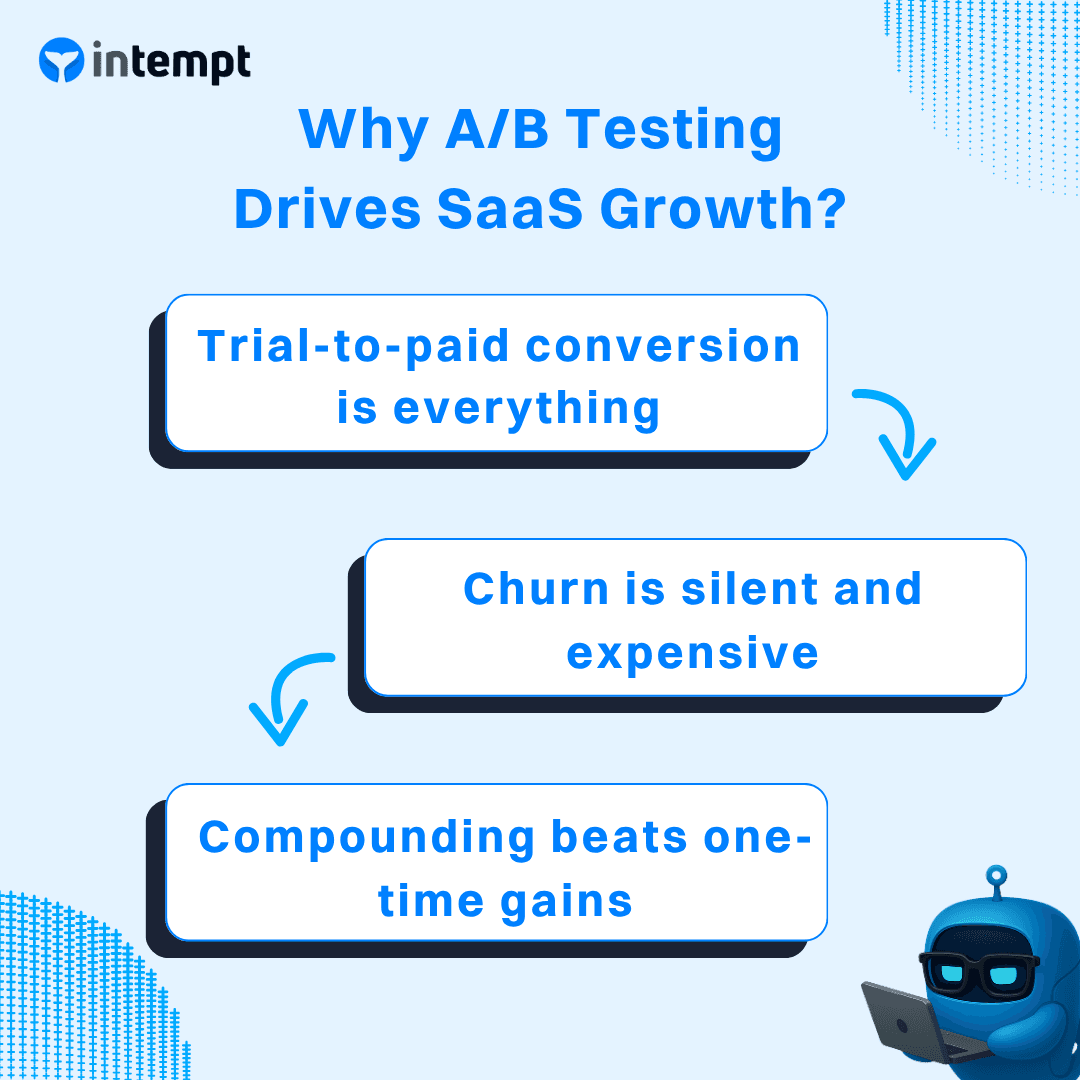

Why Does A/B Testing Drive SaaS Growth?

The global AB testing market was worth $485M in 2018. By 2025 it crossed $1B. It's projected to hit $4.4B by 2035. That's not hype. That's companies figuring out that data-driven iteration beats expensive redesigns. 77% of organizations now run AB tests. And the gap between companies that test well and those that don't is growing.

Here's why testing moves the needle specifically for SaaS:

1. Trial-to-paid conversion is everything. By the time a user starts a trial, you've already paid to acquire them, so every improvement to trial conversion drops straight to the bottom line without adding acquisition cost. Consider a product with 1,000 trials per month at $99/month. A 10-percentage-point improvement in trial-to-paid conversion means 100 more paying customers, or $9,900 in new MRR from every monthly cohort. AB testing is the fastest, lowest-risk way to find those improvements.

2. Churn is silent and expensive. Most SaaS churn isn't driven by pricing or competition. It's driven by users not getting value fast enough. AB testing your onboarding flow, feature discovery prompts, and lifecycle messaging directly attacks the root cause of churn before it shows up in your metrics.

3. Compounding beats one-time gains. A team running 10+ tests per month doesn't just get one win. They get compounding wins. Each improvement to onboarding makes the next upgrade nudge test more effective. Each pricing page win makes the trial CTA test more meaningful. Google runs over 10,000 AB tests per year. That's not because they need to. It's because they understand that systematic iteration at scale creates advantages that can't be replicated.
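The $9,900 figure only works if the 10% improvement means ten percentage points, so it's worth doing the arithmetic explicitly. The Python sketch below assumes a hypothetical 15% baseline trial-to-paid rate.

It also illustrates the compounding idea. If funnel stages multiply (activation rate times upgrade rate among activated users), relative lifts at successive stages combine multiplicatively. That multiplicative model is a simplifying assumption, not a measured result.

```python
from math import prod


def added_mrr(trials_per_month: int, baseline_rate: float,
              new_rate: float, price_per_month: float) -> float:
    """Extra MRR from one monthly trial cohort after a conversion change."""
    return round(trials_per_month * (new_rate - baseline_rate) * price_per_month, 2)


def compounded_lift(*stage_lifts: float) -> float:
    """Combined relative lift when successive funnel stages multiply."""
    return prod(1 + lift for lift in stage_lifts) - 1


baseline = 0.15  # hypothetical current trial-to-paid rate
print(added_mrr(1_000, baseline, baseline + 0.10, 99))  # +10 percentage points
print(added_mrr(1_000, baseline, baseline * 1.10, 99))  # +10% relative
print(f"{compounded_lift(0.10, 0.10):.0%}")             # two stages, +10% each
```

With the assumed 15% baseline, the first line reproduces the $9,900 from the text. A 10% relative lift on the same baseline is only $1,485. Two stages that each improve by 10% add up to a 21% overall lift.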

Common A/B Testing Scenarios in SaaS

Not "test your button color." These are the scenarios that actually move revenue.

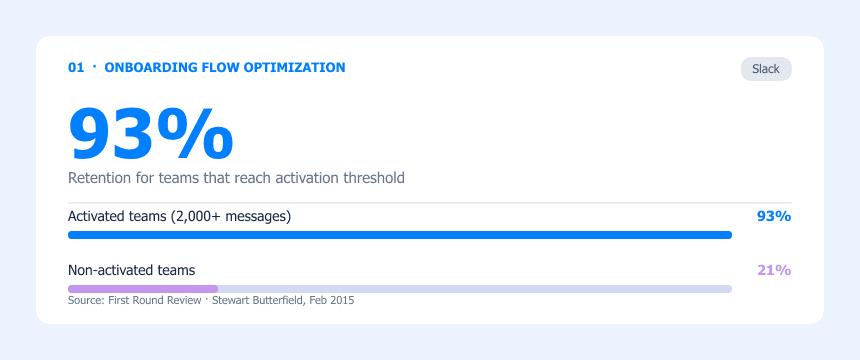

1. Onboarding Flow Optimization

Onboarding is the most important test surface in SaaS. It determines whether a user reaches their first value moment before losing interest, and everything downstream flows from it: activation rate, 30-day retention, and trial-to-paid conversion.

The job of onboarding AB testing is to reduce the distance between "just signed up" and "I get why this product exists." Teams test the number of steps before a user experiences core functionality, form length at signup, the sequence of in-app prompts, what information they ask for upfront versus later, and what the first meaningful action in the product is. Even small friction increases at signup compound into significant activation drops downstream.

Real Example: Slack

Slack AB-tested their onboarding relentlessly with one specific goal: get new teams to their activation threshold faster. Through systematic experimentation, Slack identified that teams that exchanged 2,000 messages in their history had truly tried the product. The retention impact of reaching that threshold was dramatic.

As Stewart Butterfield stated directly in a First Round Review interview (February 2015): "Regardless of any other factor, after 2,000 messages, 93% of those customers are still using Slack today." Teams that didn't reach this activation point retained at a fraction of that rate.

This is exactly what onboarding AB testing is for. Not testing button colors, but finding the specific behavioral milestone that predicts long-term retention, then testing every element of the onboarding flow to get users there faster. Slack's entire onboarding experimentation program was organized around closing the gap between signup and that 2,000-message threshold.
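The search for a milestone like Slack's 2,000 messages can be sketched as a threshold scan. For each candidate cutoff, you compare the retention of teams above it with the retention of teams below it. The Python below is a hypothetical illustration with invented data, not Slack's analysis.

A gap found this way is only a correlation. That is why the next step is to A/B test onboarding changes that push users toward the milestone.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass
class Team:
    messages: int   # messages sent during the observation window
    retained: bool  # still active at the retention checkpoint


def retention_rate(teams: List[Team]) -> float:
    """Share of teams retained; NaN for an empty group."""
    return sum(t.retained for t in teams) / len(teams) if teams else float("nan")


def retention_by_threshold(teams: List[Team], threshold: int) -> Tuple[float, float]:
    """Retention of teams at or above the threshold vs. teams below it."""
    above = [t for t in teams if t.messages >= threshold]
    below = [t for t in teams if t.messages < threshold]
    return retention_rate(above), retention_rate(below)


def best_threshold(teams: List[Team], candidates: Iterable[int],
                   min_group_size: int = 30) -> Optional[int]:
    """Candidate cutoff with the largest retention gap, ignoring tiny groups."""
    best, best_gap = None, float("-inf")
    for threshold in candidates:
        above = [t for t in teams if t.messages >= threshold]
        below = [t for t in teams if t.messages < threshold]
        if len(above) < min_group_size or len(below) < min_group_size:
            continue
        gap = retention_rate(above) - retention_rate(below)
        if gap > best_gap:
            best, best_gap = threshold, gap
    return best


# Invented data; a real scan needs far more teams per group
teams = [Team(2500, True), Team(3100, True), Team(2200, True), Team(1800, False),
         Team(900, True), Team(400, False), Team(150, False), Team(1200, False)]
print(retention_by_threshold(teams, 2000))
print(best_threshold(teams, [500, 1000, 2000], min_group_size=2))
```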

2. Pricing Page Experimentation

Your pricing page is your highest-leverage conversion page. Most SaaS teams treat it as a design problem. The teams pulling ahead treat it as their most important ongoing experiment.

The variables that matter most in pricing page testing are: how plan tiers are named and differentiated, where the CTA is positioned, whether anchor pricing is visible (showing a higher-priced plan first to make mid-tier plans look reasonable), how the value proposition is framed per tier, and whether social proof appears above or below the pricing table. Cosmetic changes rarely move the needle here. Testing the underlying logic of how value is communicated does.

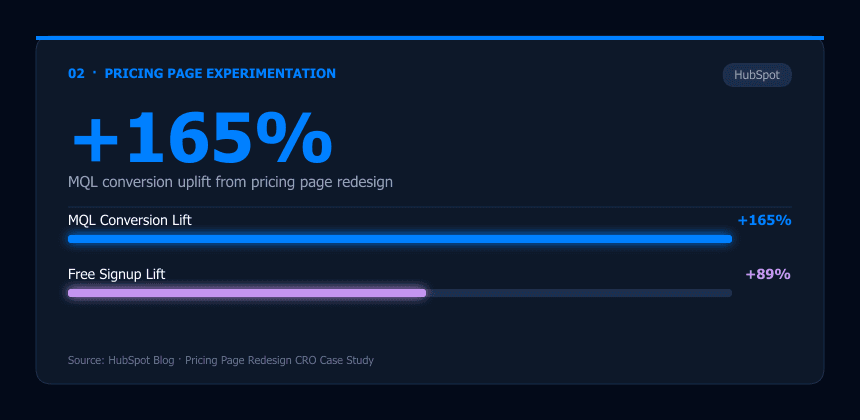

Real Example: HubSpot

HubSpot AB tested a full pricing page redesign, not just visual tweaks. The variant focused on clearer plan differentiation and stronger value proposition framing per tier. The result: +165% in MQL conversions and +89% in free signups.

That's not a headline copy test. That's rethinking what information a buyer needs to make a decision, and then testing whether that framing actually converts better. The scale of the result reflects the scale of the change being tested.

3. Trial-to-Paid Upgrade Nudges

Upgrade nudges act on users you've already paid to acquire, so every improvement to trial-to-paid conversion drops straight to MRR without adding acquisition cost. That's why upgrade nudge testing is one of the highest-ROI experiments a growth team can run.

The key variables are: when the nudge fires (contextual, triggered by a feature limit hit, vs. proactive, day-7 email regardless of usage), what it offers (discount, feature unlock, extended trial, or urgency framing), and which channel delivers it (in-app modal, email, or both). Contextual nudges consistently outperform scheduled sends because the user is already feeling the value gap when the message arrives.
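The two trigger strategies can be written as rules that the test compares. The Python sketch below is hypothetical. The usage counter, the plan limit, and the day-7 schedule stand in for whatever your product actually tracks. A real system would also make sure each user is nudged only once.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class TrialUser:
    trial_day: int   # days since trial_started
    usage: int       # hypothetical usage counter, e.g. projects created
    plan_limit: int  # hypothetical cap on the trial plan


def scheduled_trigger(user: TrialUser) -> bool:
    """Proactive arm: fire on day 7 regardless of usage."""
    return user.trial_day == 7


def contextual_trigger(user: TrialUser) -> bool:
    """Contextual arm: fire when the user runs into the plan limit."""
    return user.usage >= user.plan_limit


TRIGGERS: Dict[str, Callable[[TrialUser], bool]] = {
    "scheduled": scheduled_trigger,
    "contextual": contextual_trigger,
}


def should_nudge(arm: str, user: TrialUser) -> bool:
    """Evaluate the trigger rule for the arm the user was assigned to."""
    return TRIGGERS[arm](user)


light_user = TrialUser(trial_day=7, usage=1, plan_limit=5)
blocked_user = TrialUser(trial_day=3, usage=5, plan_limit=5)
print(should_nudge("scheduled", light_user), should_nudge("contextual", light_user))
print(should_nudge("scheduled", blocked_user), should_nudge("contextual", blocked_user))
```

The light user gets the scheduled nudge even though they haven't hit any limit yet. The blocked user gets the contextual nudge at the moment the value gap is real.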

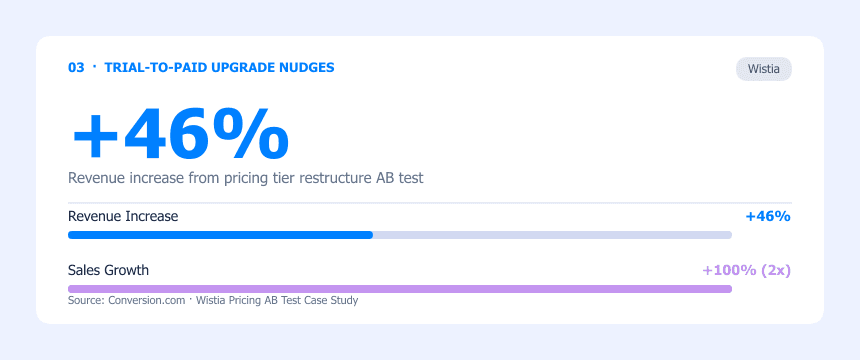

Real Example: Wistia

Wistia ran 150+ AB tests over three years on their pricing structure and upgrade mechanics. Their most significant test restructured their pricing model entirely, moving from feature-gating to limiting the number of videos per tier. This is a hypothesis-level test on the upgrade trigger itself, not just the message.

The result: +46% revenue and 2x sales while cutting ad spend by 88%. The experiment proved that how you set up the upgrade trigger, the point at which a user genuinely feels constrained by their current tier, matters far more than the copy of the upgrade CTA.

4. Churn Reduction and Cancel Flow Testing

Your cancel flow is a conversion page. It just converts in the opposite direction. Most SaaS companies treat it as a legal requirement, not a growth surface. That's a significant missed opportunity.

When a user initiates cancellation, they're already in motion toward leaving, but they haven't left yet. A well-designed cancel flow AB test presents relevant interventions at exactly this moment: offering a pause option (for users canceling due to temporary inactivity), a downgrade path (for users canceling due to price), targeted help (for users canceling due to lack of value), or a discount (as a last resort). The critical AB test is which intervention, presented to which user segment, produces the best retention outcome. One-size-fits-all cancel flows perform significantly worse than segmented ones.
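A segmented cancel flow boils down to a routing rule from cancellation context to intervention. The Python sketch below is one hypothetical version. The reason codes and the 14-day inactivity cutoff are assumptions for illustration. In a real test, you would compare this routing (or alternative routings) against a standard cancel confirmation.

```python
from dataclasses import dataclass


@dataclass
class CancelRequest:
    reason: str                 # hypothetical reason code from the cancel survey
    days_since_last_login: int


INACTIVITY_CUTOFF_DAYS = 14     # assumption: "inactive" means no login for two weeks


def choose_intervention(req: CancelRequest) -> str:
    """Map a cancellation to the intervention suggested for that case.

    Inactivity is checked first, so an inactive user who also cites price
    is offered a pause rather than a downgrade.
    """
    if req.reason == "not_using" or req.days_since_last_login >= INACTIVITY_CUTOFF_DAYS:
        return "offer_pause"          # temporary inactivity
    if req.reason == "too_expensive":
        return "offer_downgrade"      # price
    if req.reason == "not_seeing_value":
        return "offer_targeted_help"  # lack of value
    return "offer_discount"           # last resort


print(choose_intervention(CancelRequest("too_expensive", days_since_last_login=2)))
print(choose_intervention(CancelRequest("too_expensive", days_since_last_login=30)))
```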

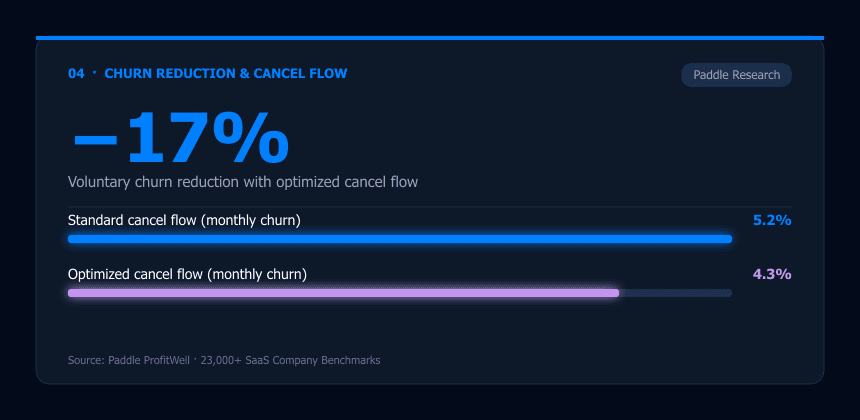

Industry Research: Paddle (ProfitWell)

Paddle, which handles billing for thousands of SaaS companies and owns the ProfitWell subscription analytics product, analyzed cancellation data across 23,000+ subscription businesses. Their benchmarks show that companies with AB-tested, segmented cancel flows reduce voluntary monthly churn by an average of 17% compared to companies using standard immediate-cancellation flows, dropping from approximately 5.2% to 4.3% monthly churn.

The finding that consistently holds: showing a pause option to users who haven't logged in recently, rather than a generic retention offer, produces the highest save rates in this specific segment. Behavioral segmentation in the cancel flow is the variable most teams haven't tested yet.
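Monthly churn compounds, so the gap between 5.2% and 4.3% is larger than it looks. The short Python calculation below shows the share of a starting cohort still subscribed after a year at each rate. It assumes a constant monthly churn rate, which is a simplification.

```python
def retained_after(months: int, monthly_churn: float) -> float:
    """Share of a cohort still subscribed, assuming constant monthly churn."""
    return (1 - monthly_churn) ** months


for churn in (0.052, 0.043):
    print(f"{churn:.1%} monthly churn -> "
          f"{retained_after(12, churn):.1%} retained after 12 months")
```

That works out to roughly 53% versus 59% of the cohort still subscribed after twelve months.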

5. In-App Messaging and Activation

In-app messaging drives activation, feature adoption, and upgrade behavior. But most teams set up messages based on time (day 3, day 7, day 14) rather than behavior. The result is messages that arrive when the user is not in the right context to act on them.

AB testing in-app messaging focuses on three variables: trigger condition (time-based vs. behavior-triggered), message format (chat bubble vs. modal vs. banner vs. tooltip), and content framing (what to do next vs. what you're missing vs. what other users like you do). Behavior-triggered messages consistently outperform time-based ones because they reach users at the exact moment the message is relevant.
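Testing all three variables at once means filling every combination of them, which is a multivariate test. The Python sketch below enumerates those combinations with itertools.product. The 2,000-users-per-cell figure is a placeholder assumption. A real per-cell requirement should come from a sample size calculation.

```python
from itertools import product

triggers = ["time_based", "behavior_triggered"]
formats = ["chat_bubble", "modal", "banner", "tooltip"]
framings = ["what_to_do_next", "what_youre_missing", "what_similar_users_do"]

USERS_PER_CELL = 2_000  # placeholder; derive from a sample size calculation

cells = list(product(triggers, formats, framings))
print(f"Full multivariate test: {len(cells)} cells, "
      f"{len(cells) * USERS_PER_CELL:,} users")

# Testing one variable at a time: trigger condition first, two arms
print(f"Trigger-only A/B test: {len(triggers)} arms, "
      f"{len(triggers) * USERS_PER_CELL:,} users")
```

The 24 cells need 12 times the traffic of the two-arm trigger test. That is why testing the trigger condition on its own is usually the practical first step.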

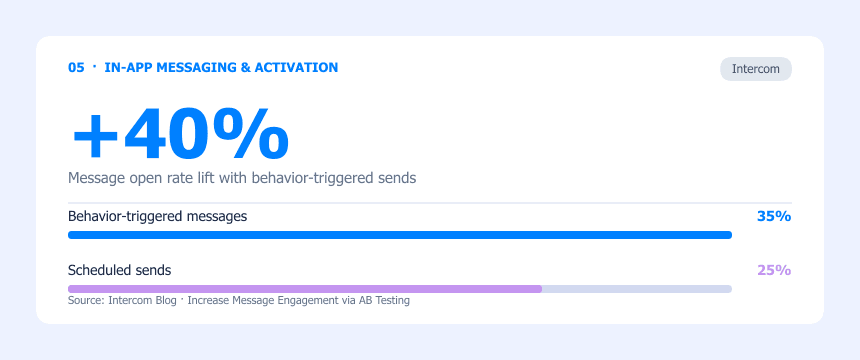

Real Example: Intercom

Intercom AB tested message timing in their own activation sequences: messages triggered immediately upon a specific user action versus messages sent on a scheduled cadence. The immediate-trigger variant produced a 35% open rate versus 25% for scheduled sends, a 40% improvement, with 2x the click-through rate.

They also documented a test where changing the activation message trigger condition alone produced a +50% activation rate improvement. No copy changes. No redesign. Just hitting the user at the right moment in their session rather than at an arbitrary time.

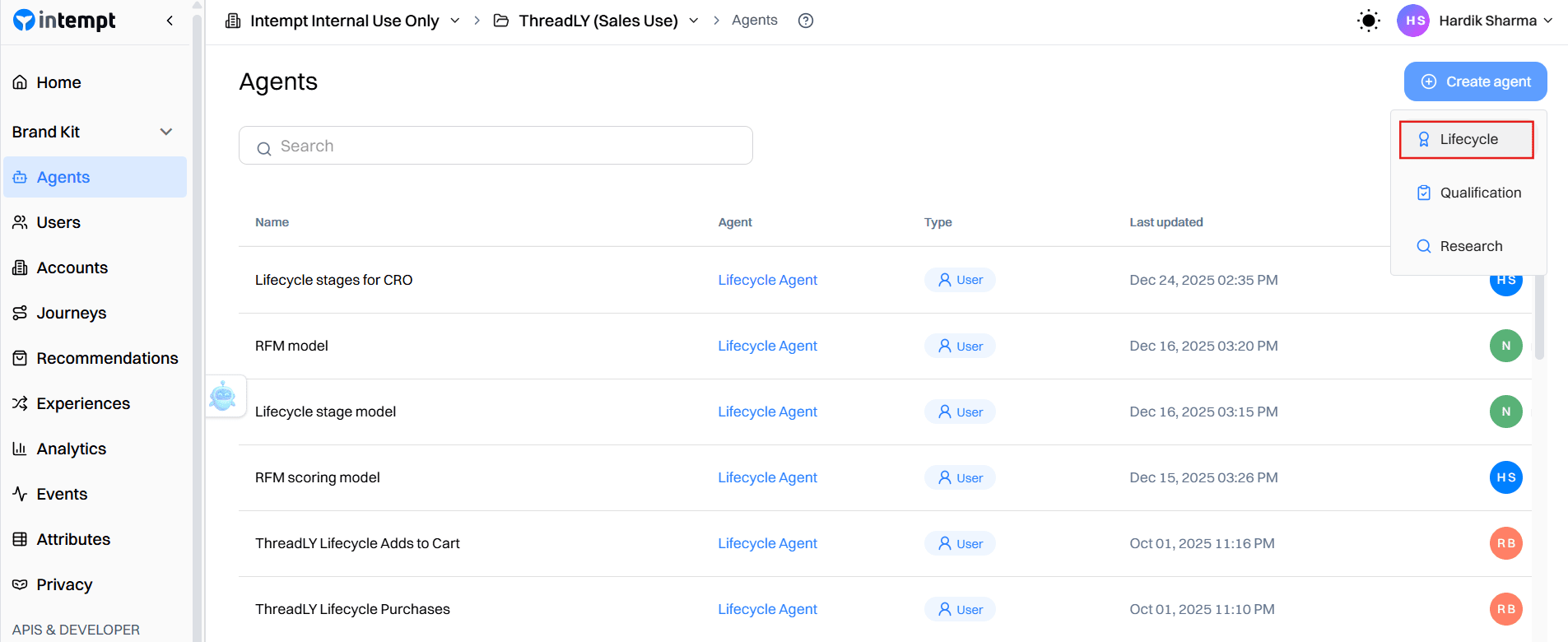

Get Your A/B Tests Right with Intempt

The biggest problem with most A/B testing setups is not the test itself, but who ends up in it. When first-time visitors and three-year customers are averaged into one result, you optimize for a mix of people who behave completely differently. The "winning" variant may actually hurt your best customers while slightly lifting everyone else.

Intempt fixes this by running experiments, on-site experiences, and recommendations off a single unified profile. So when a test wins, it doesn't just change a headline. It triggers the right experience for the right person, updates recommendation logic, and feeds directly into the next experiment. The loop actually closes.

Here's how to run your first SaaS AB test with Intempt:

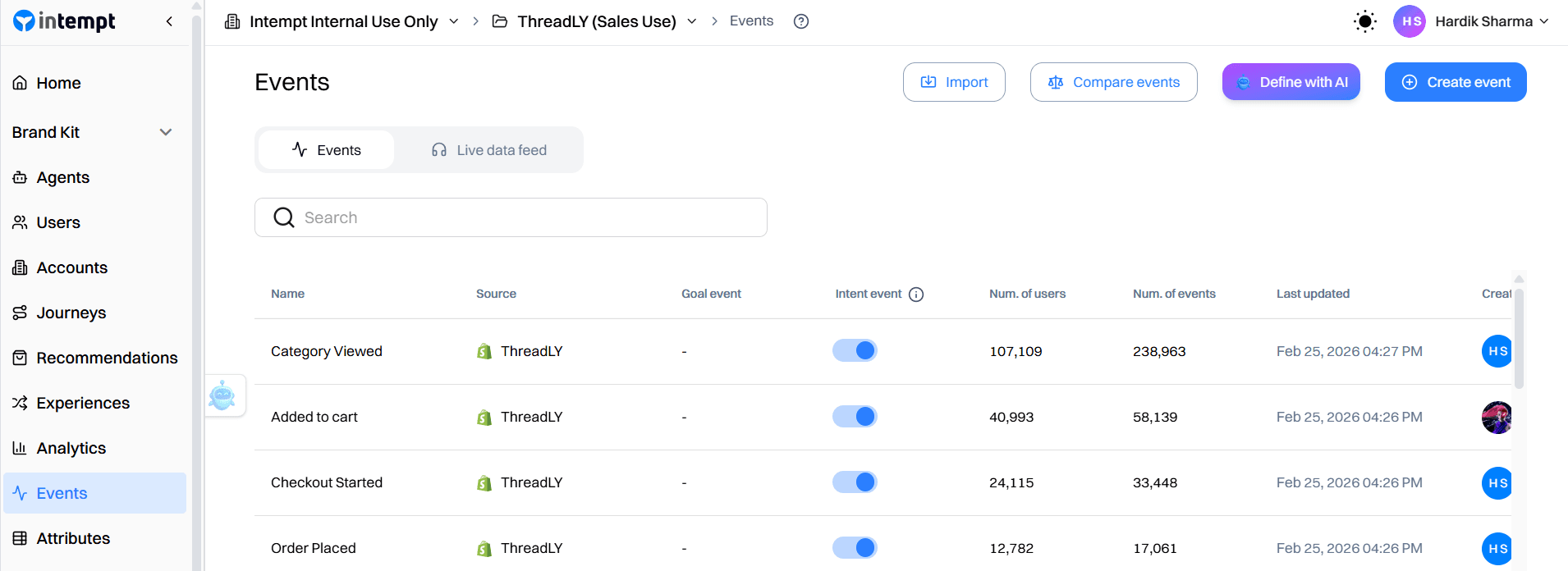

Step 1: Connect your data sources.

Before anything else, define and track the events that represent meaningful user actions in your product. Think of each event as a real-world action tied to a timestamp: when it happened and who did it.

Start with these for SaaS:

- Onboarding: trial_started, onboarding_completed, invite_sent, integration_connected

- Activation: feature_activated, session_started, key_action_completed

- Revenue: upgrade_clicked, plan_upgraded, subscription_renewed, cancel_initiated

These events are what Intempt uses to build segments, assign variants, and measure whether your test moved real behavior downstream, not just clicks.
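Before wiring up any tool, it helps to pin down what an event record contains. The Python sketch below is a hypothetical shape for such a record, not Intempt's SDK or API. Each event carries a name, a user ID, a UTC timestamp, and optional properties such as an amount. Names outside the lists above are rejected, so a typo like planUpgraded can't silently create a new event that no segment or metric picks up.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, Optional

# Event names from the lists above, grouped by lifecycle stage
TRACKED_EVENTS = {
    "onboarding": {"trial_started", "onboarding_completed", "invite_sent", "integration_connected"},
    "activation": {"feature_activated", "session_started", "key_action_completed"},
    "revenue": {"upgrade_clicked", "plan_upgraded", "subscription_renewed", "cancel_initiated"},
}
KNOWN_EVENTS = set().union(*TRACKED_EVENTS.values())


@dataclass
class Event:
    name: str            # what happened
    user_id: str         # who did it
    timestamp: datetime  # when it happened
    properties: Dict[str, Any] = field(default_factory=dict)  # e.g. {"amount": 99}


def make_event(name: str, user_id: str,
               properties: Optional[Dict[str, Any]] = None,
               timestamp: Optional[datetime] = None) -> Event:
    """Build an event, rejecting names outside the agreed tracking plan."""
    if name not in KNOWN_EVENTS:
        raise ValueError(f"untracked event name: {name!r}")
    return Event(name, user_id, timestamp or datetime.now(timezone.utc), properties or {})


upgrade = make_event("plan_upgraded", "user-42", {"amount": 99})
print(upgrade)
```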

Step 2: Define your audience segment.

Go to Segments and create the audience for your experiment. Intempt builds segments off the live event stream, so they update in real time after every new event. The moment a user's behavior shifts, they move automatically. No manual exports, no stale lists.

Build one segment per test you want to run:

| What you're testing | Segment definition |

|---|---|

| Onboarding upgrade nudge | trial_started fired, plan_upgraded not fired, active in last 7-14 days |

| Pricing page CTA | Visited /pricing, plan_upgraded not fired |

| Cancel flow intervention | cancel_initiated fired in the last 7 days |

| In-app activation message | 3+ sessions, feature_activated not fired |

| Trial expiry upgrade | trial_started fired 25+ days ago, plan_upgraded not fired |
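Segment definitions like these are just predicates over a user's event history. The Python sketch below expresses three of the rows under some simplifying assumptions:

- each history entry is a hypothetical (event_name, days_ago) pair
- "3+ sessions" is counted from session_started events
- the rows that depend on page visits or an activity window are left out

It illustrates the logic only. It is not how Intempt evaluates segments.

```python
from typing import Callable, Dict, List, Optional, Tuple

History = List[Tuple[str, int]]  # hypothetical (event_name, days_ago) pairs


def fired(history: History, name: str, within_days: Optional[int] = None) -> bool:
    """True if the event occurred, optionally within the last N days."""
    return any(n == name and (within_days is None or days_ago <= within_days)
               for n, days_ago in history)


def count(history: History, name: str) -> int:
    """How many times the event occurred."""
    return sum(1 for n, _ in history if n == name)


SEGMENTS: Dict[str, Callable[[History], bool]] = {
    "cancel_flow_intervention":
        lambda h: fired(h, "cancel_initiated", within_days=7),
    "in_app_activation_message":
        lambda h: count(h, "session_started") >= 3 and not fired(h, "feature_activated"),
    "trial_expiry_upgrade":
        lambda h: (any(n == "trial_started" and d >= 25 for n, d in h)
                   and not fired(h, "plan_upgraded")),
}


def segments_for(history: History) -> List[str]:
    """Names of every segment the user currently qualifies for."""
    return [name for name, rule in SEGMENTS.items() if rule(history)]


history = [("trial_started", 26), ("session_started", 20),
           ("session_started", 10), ("session_started", 2)]
print(segments_for(history))
```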

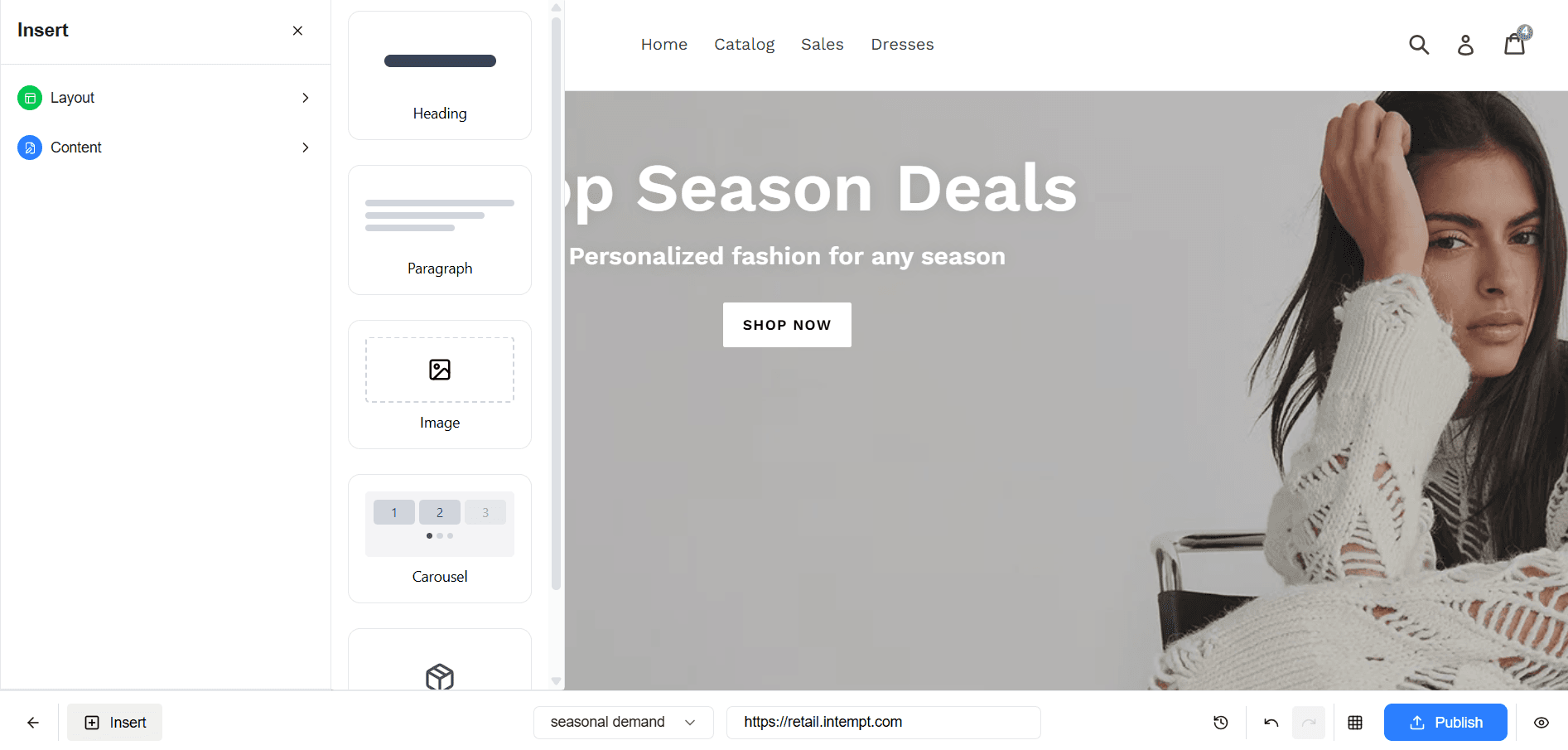

Step 3: Set up your personalized experiment.

Go to Experiences, then Create Experience, then Web. This is where you define what each segment actually sees, with no engineering required.

Here's what to configure per scenario:

- Onboarding nudge: Show an in-app banner on the dashboard ("You're 3 steps from getting full value"). Set the audience to your onboarding segment, display condition to "Always," and primary metric to onboarding_completed.

- Pricing page CTA: Swap the CTA copy from "Start Free Trial" to "See It in Action." Target all /pricing visitors excluding paid users. Set primary metric to plan_upgraded within 14 days.

- Cancel flow: Replace the immediate cancel confirmation with a pause option ("Take a break instead?"). Set display to "Once," target the cancel_initiated segment, and track subscription_renewed as your primary metric.

- In-app activation: Trigger a contextual tooltip or modal when a user opens a feature they haven't activated yet. Set the audience to users with 3+ sessions and feature_activated not fired. Track feature_activated as the primary metric.

- Trial expiry: Show a time-limited upgrade offer 3 days before the trial ends. Set a 5% control group to measure true lift. Track plan_upgraded and set conversion window to 7 days.

Unless a scenario calls for a different split (such as the 5% control group for the trial-expiry offer), assign a 50/50 traffic split. Set your experiment duration based on your calculated sample size before launch. Do not change it after the test starts.

Step 4: Define your success metric.

This is the most important step. Connect each experiment to a downstream SaaS metric, not an immediate action. Clicks on a CTA are not a success metric. Paid conversions within 14 days are.

Intempt's native revenue tracking via Stripe, HubSpot, and Shopify means you can tie variant performance directly to MRR without stitching together separate tools.

Set your primary metric to the event that actually reflects a business outcome: plan_upgraded, onboarding_completed, or subscription_renewed. Set 95% statistical significance as your threshold before you launch.

Step 5: Launch and monitor without peeking.

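Click Start. Intempt serves each variant in real time with no page lag. Do not check results daily. The platform flags when your experiment reaches 95% significance so you're not making premature calls based on early noise. Treat that flag as final only once the test has also reached the sample size and duration you set before launch.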

If you stop early because results look positive, you're shipping a false positive, not a winner.

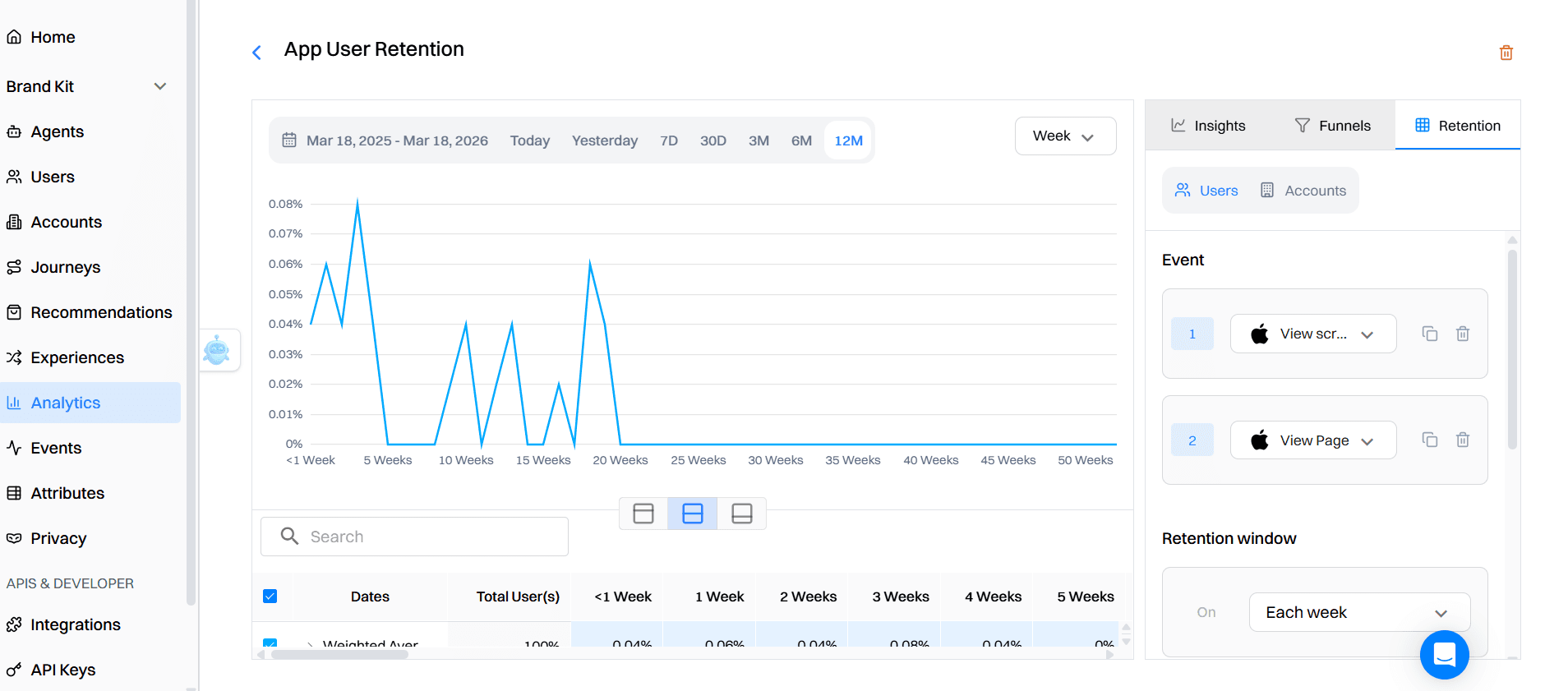

Step 6: Analyze, segment, and iterate.

Once significance is reached, go to your experiment's analytics view. Track performance across all active variants:

- Unique views: how many users saw each variant

- Conversion rate: percentage who completed the goal event

- Conversion value: total revenue driven by each variant using the amount property on your upgrade or renewal event

- Lift: how much the variant outperformed the control group

For SaaS specifically, go beyond conversion rate:

- Trial-to-paid rate by variant: did the winning variant actually improve paid conversions, or just clicks?

- 30-day activation by variant: are users who saw the winning onboarding variant more activated a month later?

- MRR impact per experiment: is the revenue from upgraded users in the variant higher than the control?

- Churn delta by cohort: is the 90-day retention rate trending up for users who went through the winning experience?
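Conversion rate, lift, and a significance check can all be computed from the per-variant counts of whichever goal event you chose. The Python sketch below uses a pooled two-proportion z-test and a normal-approximation 95% confidence interval for the difference in rates. The counts in the example are invented, and your platform's statistics engine may use a different method. The sketch also assumes the control rate is above zero.

```python
from math import sqrt
from statistics import NormalDist


def analyze(control_users: int, control_conv: int,
            variant_users: int, variant_conv: int, alpha: float = 0.05) -> dict:
    """Rates, relative lift, p-value, and CI for the absolute difference."""
    p_c = control_conv / control_users
    p_v = variant_conv / variant_users
    # Pooled z-test for H0: both arms convert at the same rate
    pooled = (control_conv + variant_conv) / (control_users + variant_users)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / control_users + 1 / variant_users))
    z = (p_v - p_c) / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    # Unpooled standard error for the confidence interval on p_v - p_c
    se_diff = sqrt(p_c * (1 - p_c) / control_users + p_v * (1 - p_v) / variant_users)
    margin = NormalDist().inv_cdf(1 - alpha / 2) * se_diff
    return {
        "control_rate": p_c,
        "variant_rate": p_v,
        "relative_lift": p_v / p_c - 1,
        "p_value": p_value,
        "diff_ci": (p_v - p_c - margin, p_v - p_c + margin),
    }


# Invented counts, e.g. plan_upgraded within 14 days per variant
print(analyze(control_users=4000, control_conv=400, variant_users=4000, variant_conv=480))
```

Here the variant shows a 20% relative lift, and the confidence interval for the absolute difference stays above zero. Running the same function on trial-to-paid or 30-day activation counts answers the downstream questions above.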

Compare daily versus cumulative views in the chart to identify whether performance is improving over time or plateauing. That's your signal to iterate or move on.

Best Practices for SaaS A/B Testing

| # | Best Practice | Why It Matters |
|---|---|---|
| 1 | Define your success metric before launch | Lock in one primary metric tied to a business outcome (paid conversions, 30-day activation, retention) before you see a single data point. Choosing metrics after you see results is how you cherry-pick winners. |
| 2 | Calculate the sample size before you start | Use a sample size calculator. Most SaaS AB tests need 1,000–5,000 unique users per variant. If your traffic can't support that in a reasonable timeframe, redesign the experiment, don't shrink the threshold. |
| 3 | Test one variable at a time | If you change headline copy, button color, and layout simultaneously, you will never know what caused the result. One change per test. Clean causality is the whole point. |
| 4 | Measure downstream SaaS metrics, not just clicks | A 20% lift in trial signups means nothing if activation drops. Track 30-day retention, trial-to-paid conversion, and MRR impact, not just the immediate conversion point. |
| 5 | Segment your results after the test | Your aggregate result hides the story. Did mobile users respond differently? Users from organic vs. paid? Power users vs. new signups? Segmented analysis reveals the real optimization opportunity. |
| 6 | Protect power users from experiment fatigue | Don't run five UI tests simultaneously on your most engaged segment. Cap experiment exposure per user cohort and time period. Power users who feel like guinea pigs churn quietly. |
| 7 | Document every test, wins and losses alike | A losing test is still information. The compounding advantage that companies like Booking.com have comes from institutional knowledge built test by test over years. |
| 8 | Match test priority to your growth stage | Early-stage: focus on onboarding and activation. Mid-stage: trial-to-paid conversion and pricing. Mature: retention, expansion revenue, and referral mechanics. Don't test pricing before you've fixed your onboarding. |
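Best practice 6 can be enforced with a simple exposure cap that is checked before a user is enrolled in a new experiment. The Python sketch below is a hypothetical in-memory version. The limit of two experiments per rolling 30 days is an assumption you would tune, and you might apply it only to your most engaged cohort. A production system would persist the exposure log rather than keep it in a dict.

```python
from collections import defaultdict
from datetime import date, timedelta
from typing import Dict


class ExposureCap:
    """Hypothetical guard: at most `max_experiments` distinct experiments
    first entered by a user within a rolling window of `window_days` days."""

    def __init__(self, max_experiments: int = 2, window_days: int = 30):
        self.max_experiments = max_experiments
        self.window = timedelta(days=window_days)
        # user_id -> {experiment_id: date of first exposure}
        self._log: Dict[str, Dict[str, date]] = defaultdict(dict)

    def can_enroll(self, user_id: str, experiment_id: str, today: date) -> bool:
        exposures = self._log[user_id]
        if experiment_id in exposures:
            return True  # already enrolled: keep showing the same variant
        recent = [d for d in exposures.values() if today - d < self.window]
        return len(recent) < self.max_experiments

    def record(self, user_id: str, experiment_id: str, today: date) -> None:
        self._log[user_id].setdefault(experiment_id, today)


cap = ExposureCap(max_experiments=2, window_days=30)
start = date(2026, 1, 1)
for experiment in ("pricing_cta", "onboarding_nudge"):
    if cap.can_enroll("power-user-1", experiment, start):
        cap.record("power-user-1", experiment, start)
print(cap.can_enroll("power-user-1", "cancel_flow", start + timedelta(days=5)))
```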

Bottom Line

AB testing is how fast-growing SaaS companies make confident decisions without betting the roadmap on gut feelings. The companies pulling ahead (Slack, HubSpot, Wistia, Intercom) aren't running more experiments because they have bigger teams.

They're running better experiments because they've built the right infrastructure and process around testing.

The honest tradeoff: real AB testing requires patience. Two-week minimum test durations, 95% significance thresholds, and downstream metric tracking feel slow when you're used to shipping fast.

But the alternative is faster decisions with lower accuracy. You'll ship more changes and see less improvement. That's a worse tradeoff.

If you want to start now, pick one experiment. Your onboarding flow is almost always the highest-leverage place to begin. Form a clear hypothesis, define one downstream success metric, calculate your sample size, and run it properly.

Then document the result regardless of outcome. That institutional knowledge, accumulated test by test, is the actual competitive advantage.

TL;DR

- AB testing for SaaS isn't about button colors. It's about systematically optimizing trial conversion, onboarding activation, upgrade triggers, and churn reduction.

- Only 1 in 7 AB tests produces a statistically significant result. That's not failure. That's what rigorous experimentation looks like.

- Companies running 10+ tests per month grow revenue 2.1x faster than those running just two.

- Real examples: Slack found that 93% of teams that reached 2,000 messages were still using the product and organized its onboarding experiments around that threshold. HubSpot's pricing page AB test drove +165% MQL conversions. Wistia's pricing tier restructure delivered +46% revenue. Intercom's behavior-triggered messages hit 35% open rates vs. 25% for scheduled sends.

- The three most common mistakes: peeking at results early, measuring the wrong metric, and running experiments without statistical significance requirements.

- Downstream metrics (30-day activation, paid conversions, 90-day retention) matter more than immediate click rates.

- Intempt's personalized experiments module connects experiment data, user profiles, and revenue tracking in one platform so you're measuring real business outcomes, not just surface conversions.

- Always start with a hypothesis, define your success metric before launch, and run tests for at least two full weeks.

Frequently asked questions. Answered.

How many users do I need for a SaaS A/B test?

For most SaaS AB tests, you need a minimum of 1,000 to 5,000 unique users per variant to reach statistical significance. The exact number depends on your baseline conversion rate and how large an improvement you need to detect. Lower baseline conversion rates require larger sample sizes. Use a sample size calculator before launching any experiment, and don't cut the test short when you see early positive trends.

How long should a SaaS A/B test run?

Run every AB test for a minimum of two full weeks regardless of early results. This eliminates day-of-week bias, since SaaS user behavior varies significantly between weekdays and weekends. For lower-traffic pages or features, you may need four to six weeks to reach a sufficient sample size. Stopping early is the single most common cause of false positives in SaaS AB testing.

How do I choose the right success metric for a SaaS A/B test?

Define your primary success metric before launching the test, tied to a business outcome like 30-day activation rate, trial-to-paid conversion, or 90-day retention. Avoid using surface metrics like click rate or signup rate as your primary measure unless they're directly tied to revenue. Tools like Intempt let you connect experiment variants to downstream revenue metrics like MRR and paid conversions so you're tracking what actually matters.

How is A/B testing in SaaS different from eCommerce?

In eCommerce, you're optimizing for a single transaction. In SaaS, you're optimizing a lifecycle that spans onboarding, activation, retention, and expansion. A change that improves free signups can still lose if it reduces activation rates downstream. SaaS AB tests need longer measurement windows and must track lifecycle metrics, not just top-of-funnel conversions.

What's the difference between A/B testing and multivariate testing?

AB testing changes one variable and compares two versions. Multivariate testing changes multiple variables simultaneously to find the best combination. For most SaaS companies, AB testing is the right starting point because it produces cleaner causal data and requires less traffic. Multivariate testing needs 50,000+ monthly visitors per page to be statistically valid and is best suited for high-traffic pages like your homepage or pricing page.

Can Intempt run A/B tests across the full SaaS lifecycle?

Yes. Intempt runs personalized experiments across the entire SaaS lifecycle including onboarding sequences, in-app upgrade nudges, lifecycle email timing, and cancel flow interventions. Because Intempt combines a CDP with behavioral analytics and marketing automation, each experiment is tracked against downstream metrics like 30-day retention and paid conversions, not just immediate clicks. This makes it useful for the high-leverage, post-signup experiments that move MRR.

What is the most common A/B testing mistake SaaS teams make?

Peeking at results and stopping tests early when they see a positive trend. This produces false positives at a high rate because early data is statistically noisy. Set your significance threshold at 95%, calculate your required sample size before launching, and commit to running the full duration. The second most common mistake is measuring immediate conversions instead of downstream SaaS metrics like activation and retention.

What should a SaaS company A/B test first?

Start with your onboarding flow. It's the highest-leverage point in the SaaS lifecycle because it determines whether users reach their first value moment before they lose interest. Specifically, test the number of steps required before a user experiences core product value, the phrasing of your activation prompts, and the timing of your first meaningful in-app communication. These experiments consistently produce the largest downstream improvements to trial-to-paid conversion and 30-day retention.

About the author

Hardik Sharma

Content Writer

Hardik researches and writes about marketing tools, sales technology, and customer engagement platforms. His work helps teams evaluate software with honest feature and pricing breakdowns.
